MontyCloud's MSP customers needed to answer WAFR questions at a granular level to claim $5,000 AWS credits — but no interface existed for it. I designed the first question-level tracking interface in a 14-day sprint. The feature made the workflow possible; the UX decisions made it usable.

Outcome: Before this shipped, no MSP had claimed credits through the platform, because the workflow didn't exist. Within 6 months, 16 MSPs had claimed credits through it.

Decisions at a glance

| Decision | What I chose | What I rejected | Why |
|---|---|---|---|
| Risk filtering | Clickable summary counts that filter the question list | Separate filter dropdown controls | Connecting the summary to the list removed a manual scanning step; identification time dropped from 8-12 min to under 1 min (observed, small sample) |
| Status indicators | Semantic icons (clock, x-mark, exclamation mark, checkmark, dash) | Color-coded dots (red/green/gray) | Red-green color blindness affects ~8% of male users; shape communicates status without relying on color |
| Scope for 14-day sprint | Ship tracking + filtering, cut historical progress view | Build everything, including timeline tracking | Data infrastructure for cross-run tracking didn't exist; shipping the core workflow first let MSPs start claiming credits immediately |
| Validation approach | PM feedback + competitive research (nOps, AWS native tool) | Direct MSP user testing | Time constraint. In hindsight, even 2-3 MSP sessions would have caught edge cases earlier |

What existed before

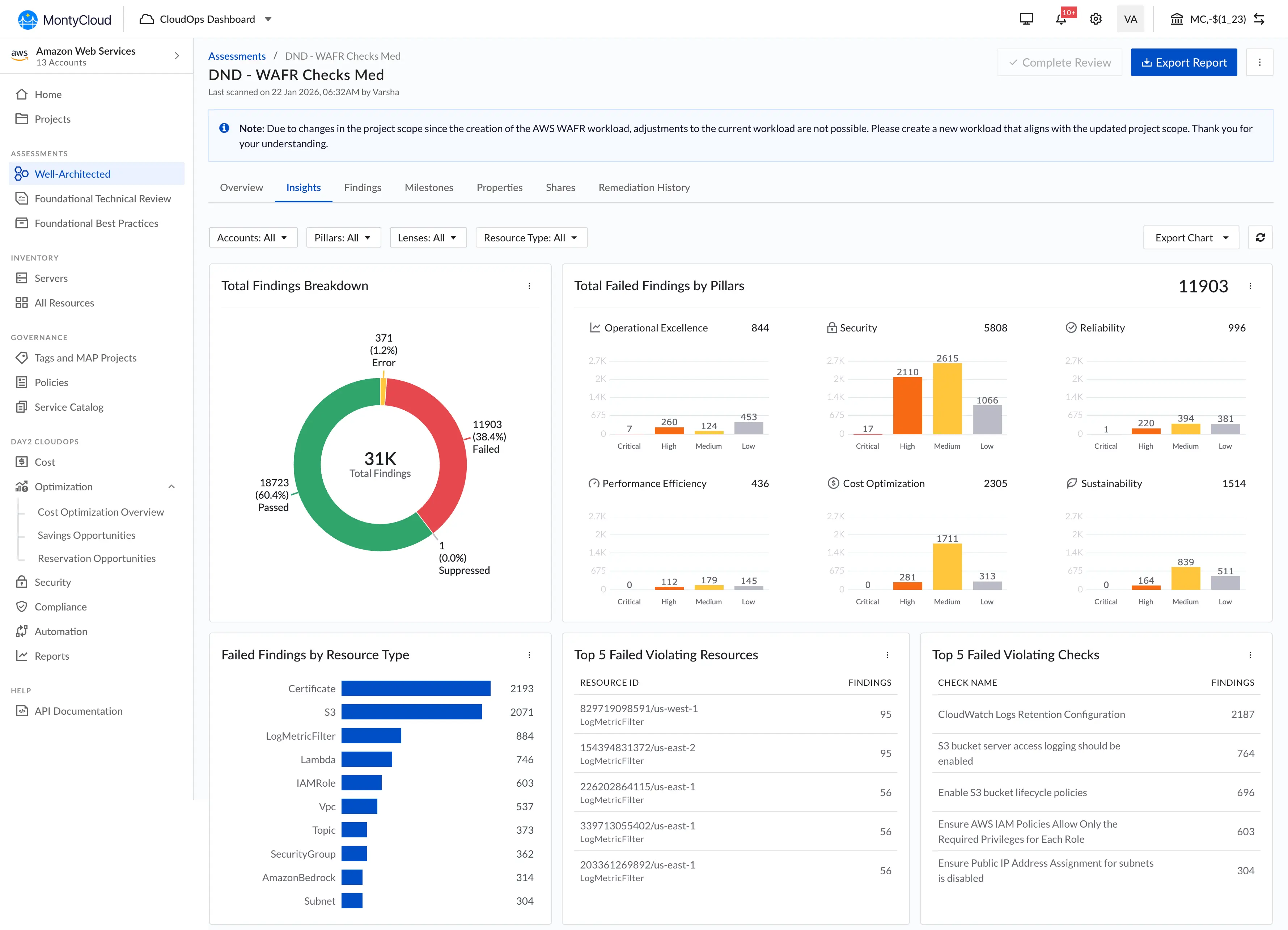

MontyCloud had WAFR check execution, but the interface was minimal:

- A basic table view of checks

- Summary stats showing High Risk and Medium Risk counts

- Findings donut charts (Passed/Failed/Error)

- No filtering, no drill-down, no question-level tracking

- No progress visibility ("How far along am I?")

MSPs were manually tracking question status in spreadsheets. It took 8-12 minutes just to identify which issues were high-risk. Zero MSPs had successfully claimed AWS credits through the platform.

How this started

PM came to me with a straightforward request based on customer feedback: "Customers are asking for a question-level interface. They need to track individual WAFR questions to claim credits."

My first step was competitive research. I looked at:

- nOps (direct competitor) — had a question-level interface

- AWS's native WAFR tool — had its own question tracking

Both validated the pattern. Customers expected question-level granularity because they'd seen it elsewhere. This wasn't about inventing something new — it was about building a missing capability and making it work well within our constraints.

I used both as reference for interaction patterns (segmented navigation, question sidebar, progress tracking). The differentiation would come from usability, not from the concept itself.

Design decisions

1. Interactive risk filtering

The existing summary showed counts like "6 High Risk, 12 Medium Risk" — but they were just labels. Static text.

I made them clickable. Click "6 High Risk" and the question list filters to show only high-risk items.

In post-launch observation with a small number of users, identifying critical issues dropped from 8-12 minutes to under a minute — the filter was doing the work that manual list-scanning used to require.

It wasn't a novel pattern — filter chips are standard. But in this context, connecting the summary directly to the question list removed a step users were doing manually (scanning the full list, counting severity levels, trying to remember which ones mattered).
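A minimal sketch of the idea, assuming a flat list of questions that each carry one status: the chip counts are derived from the same list the chips filter, so "6 High Risk" and the filtered list can never disagree. The `Status` values, the `Question` shape, and the `countByStatus` / `filterByStatus` helpers are hypothetical names for illustration, not MontyCloud's actual code.

```typescript
// Hypothetical status values, mirroring the severity filter bar.
type Status = "unanswered" | "high" | "medium" | "none" | "notApplicable";
type SeverityFilter = Status | "all";

interface Question {
  id: number;
  pillar: string;
  status: Status;
}

// Chip counts are computed from the same list the chips filter,
// so a count and its filtered result always match.
function countByStatus(questions: Question[]): Record<SeverityFilter, number> {
  const counts: Record<SeverityFilter, number> = {
    all: questions.length,
    unanswered: 0,
    high: 0,
    medium: 0,
    none: 0,
    notApplicable: 0,
  };
  for (const q of questions) {
    counts[q.status] += 1;
  }
  return counts;
}

// Clicking a chip sets the active filter; the list re-renders from this result.
function filterByStatus(questions: Question[], active: SeverityFilter): Question[] {
  if (active === "all") {
    return questions;
  }
  return questions.filter((q) => q.status === active);
}

const sample: Question[] = [
  { id: 1, pillar: "Security", status: "high" },
  { id: 2, pillar: "Security", status: "none" },
  { id: 3, pillar: "Reliability", status: "high" },
  { id: 4, pillar: "Reliability", status: "unanswered" },
];

const counts = countByStatus(sample);
const visible = filterByStatus(sample, "high");
console.log(`${counts.high} High Risk`); // prints 2 High Risk
console.log(visible.map((q) => q.id).join(", ")); // prints 1, 3
```

Deriving counts and the filtered list from one source of truth is what lets the summary double as the filter control, instead of being a static label next to it.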

2. Status icons vs. color dots

PM initially proposed colored dots to indicate question status — green for done, red for high risk, gray for unanswered. This was the pattern nOps used.

I pushed back with a specific argument: colored dots fail for color-blind users. To someone with red-green color blindness, a red dot and a green dot can be hard or impossible to tell apart. That's roughly 8% of male users.

My alternative: semantic status icons, with a pending clock for unanswered, an x-mark for high risk, an exclamation mark for medium risk, a checkmark for no risk ("None"), and a dash for not applicable. Each icon communicates status through shape, not just color.

PM agreed immediately when I showed the accessibility argument.
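One way to encode the icon decision, sketched under assumptions: each status maps to a distinct shape plus a text label that can back an `aria-label`, so status never depends on hue alone. The icon identifiers, label strings, and the `accessibleName` / `shapesAreDistinct` helpers are hypothetical; the shipped icon set and markup aren't specified here.

```typescript
type Status = "unanswered" | "high" | "medium" | "none" | "notApplicable";

// Hypothetical icon identifiers, one distinct shape per status.
type IconShape = "clock" | "x-mark" | "exclamation" | "checkmark" | "dash";

interface StatusIndicator {
  icon: IconShape;
  label: string; // text equivalent, so meaning never relies on color
}

const STATUS_INDICATORS: Record<Status, StatusIndicator> = {
  unanswered: { icon: "clock", label: "Unanswered" },
  high: { icon: "x-mark", label: "High Risk" },
  medium: { icon: "exclamation", label: "Medium Risk" },
  none: { icon: "checkmark", label: "None" },
  notApplicable: { icon: "dash", label: "Not Applicable" },
};

// Builds the accessible name for an icon, e.g. for an aria-label attribute.
function accessibleName(status: Status): string {
  const indicator = STATUS_INDICATORS[status];
  return `Status: ${indicator.label}`;
}

// Design check: no two statuses share a shape.
function shapesAreDistinct(): boolean {
  const shapes = (Object.keys(STATUS_INDICATORS) as Status[]).map(
    (s) => STATUS_INDICATORS[s].icon
  );
  return shapes.every((shape, i) => shapes.indexOf(shape) === i);
}

console.log(accessibleName("high")); // prints Status: High Risk
console.log(shapesAreDistinct()); // prints true
```

Color can still be layered on top of this mapping; the point is that removing it leaves every status distinguishable.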

3. Progress tracking

Added a progress bar showing overall progress (e.g., "6/57 questions answered"), with a per-pillar breakdown in the navigation tabs.

This was critical for the credit claim workflow. AWS needs evidence of remediation progress. Without visible progress, MSPs couldn't demonstrate to AWS that they were actually working through the framework.
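The progress arithmetic is simple, but where it's computed matters: the overall bar ("6/57") and each pillar tab ("0/11") should come from the same rule. A minimal sketch, assuming every status except Unanswered counts as answered (including Not Applicable); the `progressOf` and `progressByPillar` helpers are hypothetical names.

```typescript
type Status = "unanswered" | "high" | "medium" | "none" | "notApplicable";

interface Question {
  pillar: string;
  status: Status;
}

interface Progress {
  answered: number;
  total: number;
}

// Assumption: every status except "unanswered" counts as answered,
// including "notApplicable".
function progressOf(questions: Question[]): Progress {
  const answered = questions.filter((q) => q.status !== "unanswered").length;
  return { answered, total: questions.length };
}

// Groups questions by pillar so each navigation tab can show its own count.
function progressByPillar(questions: Question[]): Record<string, Progress> {
  const groups: Record<string, Question[]> = {};
  for (const q of questions) {
    if (!groups[q.pillar]) {
      groups[q.pillar] = [];
    }
    groups[q.pillar].push(q);
  }
  const result: Record<string, Progress> = {};
  for (const pillar of Object.keys(groups)) {
    result[pillar] = progressOf(groups[pillar]);
  }
  return result;
}

function formatProgress(p: Progress): string {
  return `${p.answered}/${p.total}`;
}

// Example: two pillars, one question answered in total.
const questions: Question[] = [
  { pillar: "Operational Excellence", status: "unanswered" },
  { pillar: "Operational Excellence", status: "unanswered" },
  { pillar: "Security", status: "high" },
  { pillar: "Security", status: "unanswered" },
];

const byPillar = progressByPillar(questions);
console.log(formatProgress(progressOf(questions))); // prints 1/4
console.log(`Operational Excellence ${formatProgress(byPillar["Operational Excellence"])}`); // prints Operational Excellence 0/2
```

Because the overall bar and the tabs share `progressOf`, the pillar counts always sum to the overall count.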

What didn't ship: Historical progress tracking (a timeline view showing WAFR scores improving over time). Scoped out due to the 14-day timeline. The data infrastructure for tracking changes across multiple WAFR runs didn't exist yet.

What we shipped

- Severity filter bar: Clickable status counts (All, Unanswered, High Risk, Medium Risk, None, Not Applicable)

- Pillar filter bar: Clickable pillars with question counts (Operational Excellence, Security, Reliability, Performance Efficiency, Cost Optimization, Sustainability)

- Left sidebar: Question navigation (1-11) with status icons

- Main panel: Question details, best practice checklist, risk exposure, findings counts, notes field

- Info side drawer: Clicking the 'i' button next to an option opens a side drawer with the explanation of that option

Plus lens navigation tabs showing progress per AWS pillar (e.g., "Operational Excellence — 0/11").

Impact

Measured outcomes (6 months post-launch):

| Metric | Before | After |
|---|---|---|
| MSPs who claimed AWS credits | 0 | 16 |
| Time to identify high-risk issues | 8-12 min | ~1 min (observed, small sample) |
| Assessment completion rate | ~34% | ~87% |
| Support tickets (WAFR confusion) | 23/month avg | 3/month avg |

Attribution honesty:

The jump from 0 to 16 credit claims wasn't because of good UX polish — it was because the feature made the workflow possible at all. Before this, MSPs literally could not track questions through MontyCloud. They had to use spreadsheets or AWS's native tool.

The UX decisions (filtering, icons, navigation) made the tool usable and efficient. But the feature itself made credits claimable. I want to be clear about that distinction because it's easy to overattribute business outcomes to design work.

The completion rate increase (34% to 87%) is a clearer signal of UX impact — that's people finishing a process they previously abandoned, not a new capability.

Process

14-day sprint:

- Days 1-2: Competitive research, PM alignment, initial wireframes

- Days 3-5: Design iteration, engineering feasibility check

- Days 6-10: Detailed design, daily PM syncs, developer handoff

- Days 11-14: Implementation support, edge case handling, QA

What the daily PM syncs looked like:

Short (15-20 min). Mostly about scope management. "Can we add X?" followed by "What do we cut to fit?" The 14-day constraint was real — no one pretended otherwise.

What I'd do differently

Test with actual MSP customers, not just PM feedback. We validated through PM's understanding of customer needs, which was generally accurate but filtered. Direct usability testing with even 2-3 MSPs would have caught edge cases earlier.

Push for analytics instrumentation from day one. The "under a minute" figure came from observing 3 users post-launch, not from proper instrumentation. I should have scoped analytics tracking into the initial sprint.

What I learned

Competitive research isn't admitting defeat. Looking at nOps and AWS's native tool gave us validated patterns and saved days of exploration. The value wasn't in inventing a new paradigm — it was in executing well within proven patterns.

Constraints force honesty. 14 days meant we couldn't hide behind complexity. Every decision had to justify itself immediately: "Does this help MSPs claim credits faster?" If no, it's out.

The PM relationship matters more than the PM disagreement. The status icons conversation is a good example. PM had a reasonable idea (colored dots work for most people). I had a better argument (accessibility). It resolved in 5 minutes because we had a working relationship, not because I "won" a debate.